Mistral Made Me Feel Like It Was 2024 Again (Mistral Hackathon 2026)

Not in a Good Way

I just submitted my project to the Mistral hackathon.

A few hours ago. I built something, shipped something, and now I have thoughts: not about what I built, but about the tool I used to build it.

This is about Mistral. And I’ll try to be fair, even though my feelings are mixed.

I Want European AI to Win

Let me be transparent about where I’m coming from.

I’m Polish. I live in the EU. When it comes to AI, I genuinely want something that is ours. Not out of nationalism - out of choice. If Mistral or another European model reached the level of Claude or the latest from OpenAI, I’d have a real alternative. I’d have competitive pressure. I’d have options that don’t require routing everything through American infrastructure.

That matters to me. More than I expected it to before this hackathon.

So I went in with real goodwill. I wanted to see what Mistral could do on a serious project. I’d used it briefly before, months ago. This was my first time actually depending on it for something I cared about finishing.

Some Things Worked. Then the Friction Started.

There is a CLI, and there’s a vibe coding mode that actually functions. First few prompts? Not bad. I asked, it delivered.

Then I started noticing the friction. Small things. A function that almost worked but needed manual correction. A structure that was 80% right and needed me to fill the rest. A pattern I had to repeat three times before it stuck.

It felt familiar - in the wrong way.

I’ve been working daily with frontier AI coding tools for months. Claude Code, Codex. Tools where you state what you want and mostly get it. Where the model meets you halfway, sometimes more. Where the bottleneck is clarity of thought, not execution quality.

With Mistral, I was doing what I used to do about a year ago. Providing structure manually. Correcting small weird things. Going back and forth more than I wanted to.

It was faster than writing everything by hand. It was useful. But it felt like 2024.

What Frontier Models Changed

Something genuinely shifted in the last year of AI-assisted coding.

With the current top-tier models, if you invest real time into a session and know what you’re building, you can get something production-grade. Not just a prototype with sharp edges. Something that actually holds up.

I’ve been tracking this shift closely - the ROI calculation has changed. The skill that matters now isn’t prompt engineering in the old sense. It’s knowing what you want and stating it clearly. The model handles the rest.

With Mistral, the old constraints came back. You need to understand its quirks. You need to manage it more carefully. I’ve spent months building with CLI-based AI tools, so I know the difference between a skill gap and a tool limitation.

This felt like a tool limitation.

What I Built (And Why I Kept It Simple)

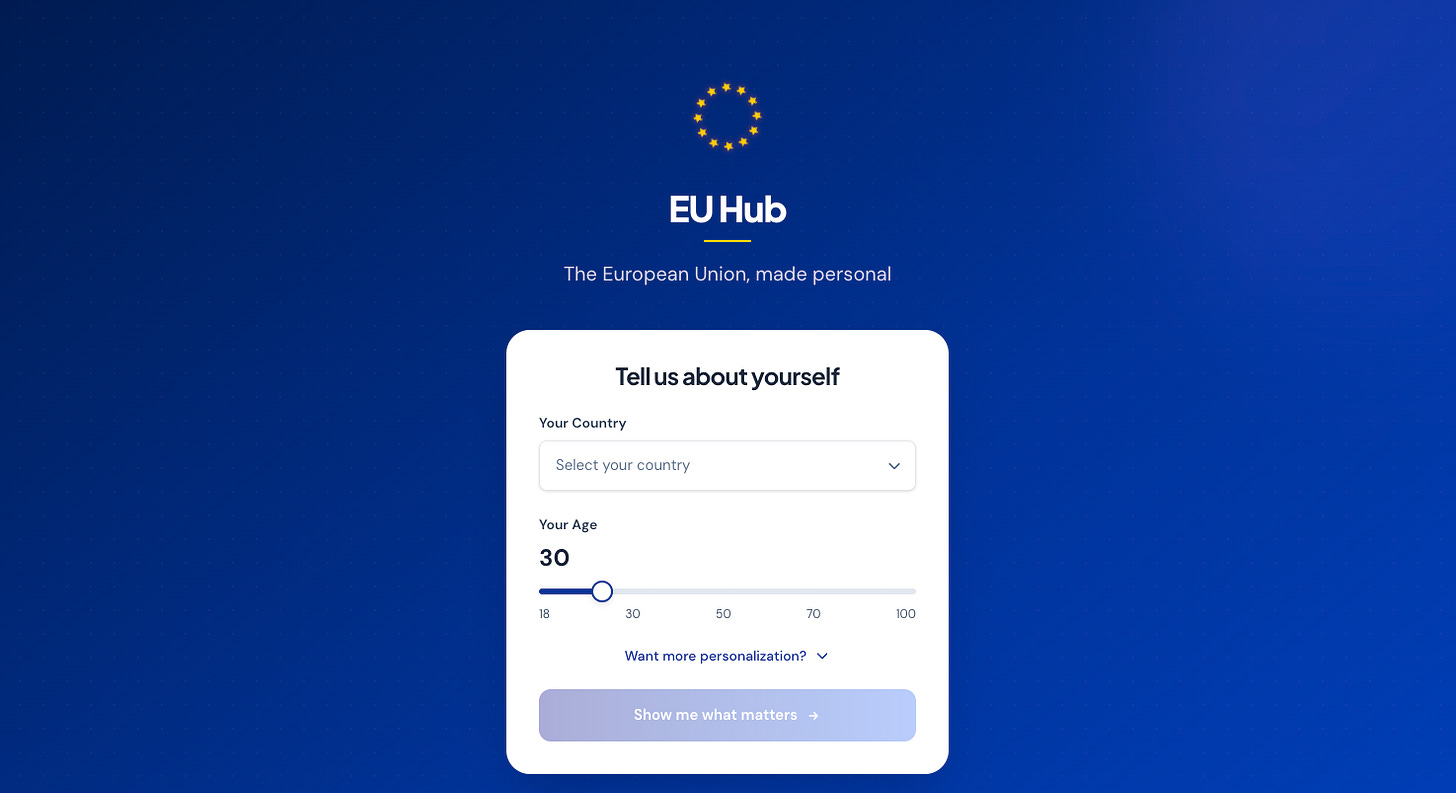

I ended up building a personalized European Union information hub. Statistics, legislation context, data that adapts based on who’s looking at it. Something objective and more transparent than the usual political noise about the EU.

The idea is decent. I think it has real potential.

But the depth I reached was limited. Not by time - I had enough. Limited by the tool. When you’re correcting and adjusting and going back and forth, you don’t push into complex territory. You scope down to what you can confidently finish.

I’ve noticed this before: the model determines how ambitious you dare to be. When the model is capable, you aim higher. When it’s not, you protect yourself by keeping the project smaller.

That’s what I did here. And I’m a little disappointed about it.

The Broader European AI Picture

Mistral isn’t the only European attempt worth mentioning. I also tested Bielik, the Polish LLM available on Hugging Face. I appreciate that it exists. But it’s not close to Mistral, and Mistral isn’t close to the frontier.

There are open-source models, models you can run locally if you have GPU power, models from various European research teams. Activity and investment are real. But right now, in early 2026, nothing European competes with the top American models for real coding work.

And the frontier is not slowing down.

The gap that feels uncomfortable today could become a structural problem if Europe doesn’t accelerate. I want that to change. I’m genuinely rooting for it to change. We deserve a competitive AI ecosystem, not just a symbolic presence in it.

My Honest Conclusion

The hackathon was fun. Building under pressure, even with friction, is energizing. I like the sprint format. I’d do another one.

But if I’m being real: I wouldn’t choose Mistral for serious production work right now. Not when the alternative lets me think bigger and execute faster with less overhead.

Mistral has improved since I last used it. The trajectory is right. The foundation is there. It’s just not enough yet - and “not enough yet” in a field moving this fast is a real problem.

I want to root for European AI. This hackathon made it harder to do uncritically.

If Mistral reads this: please go faster.

I write about building with AI, automation that actually runs, and what it’s like to use these tools at the edge of what they can do. If that’s interesting to you, consider subscribing - it’s free, and the occasional paid post funds the experiments.

If you’re curious about how I run AI agents overnight while I sleep, the Night Shift Playbook covers the exact system. $19, or free for paid subscribers.
