88% of Companies Use AI. Only 6% See Real Results.

Here’s What’s Actually Missing.

I live in an AI bubble. I know that now. The gap between what I do with AI every day and what most professionals experience is not a small gap. It’s a canyon. And the latest research from Anthropic, McKinsey, and Salesforce just put hard numbers on how wide it really is.

I wrote about living inside an AI bubble a while back. The moment it clicked was a conversation with my neighbor. She’s a professional developer. Uses Gemini sometimes. Had never heard of Claude, never used an AI agent, didn’t know what autonomous coding meant. She’s a smart, capable engineer. She just wasn’t in the same world I was in.

That conversation stuck with me. Because if a developer can be that far outside the AI bubble, what does it look like for a sales manager in a mid-size company? Or a recruiter at a 50-person firm? Or a marketing director who’s been told to “use AI more” but hasn’t been shown what that actually means?

I started paying attention. I talked to people. A friend in sales told me he uses ChatGPT to “clean up emails sometimes.” A recruiter I know said her team bought an AI screening tool, used it for two weeks, then went back to manual review because nobody understood the output. A marketing manager at a conference told me his whole team’s AI usage was basically: paste text, get summary, paste more text, get another summary.

This is not what AI adoption looks like. This is what the appearance of AI adoption looks like.

Then the data showed up to confirm what I was seeing.

The Numbers

88% of organizations now use AI in at least one function, according to McKinsey’s latest State of AI report. Sounds like the race is over, right? Except only 6% of those companies qualify as “AI high performers” who actually see meaningful business impact. Only 39% report any EBIT impact at all, and for most of those it’s less than 5%.

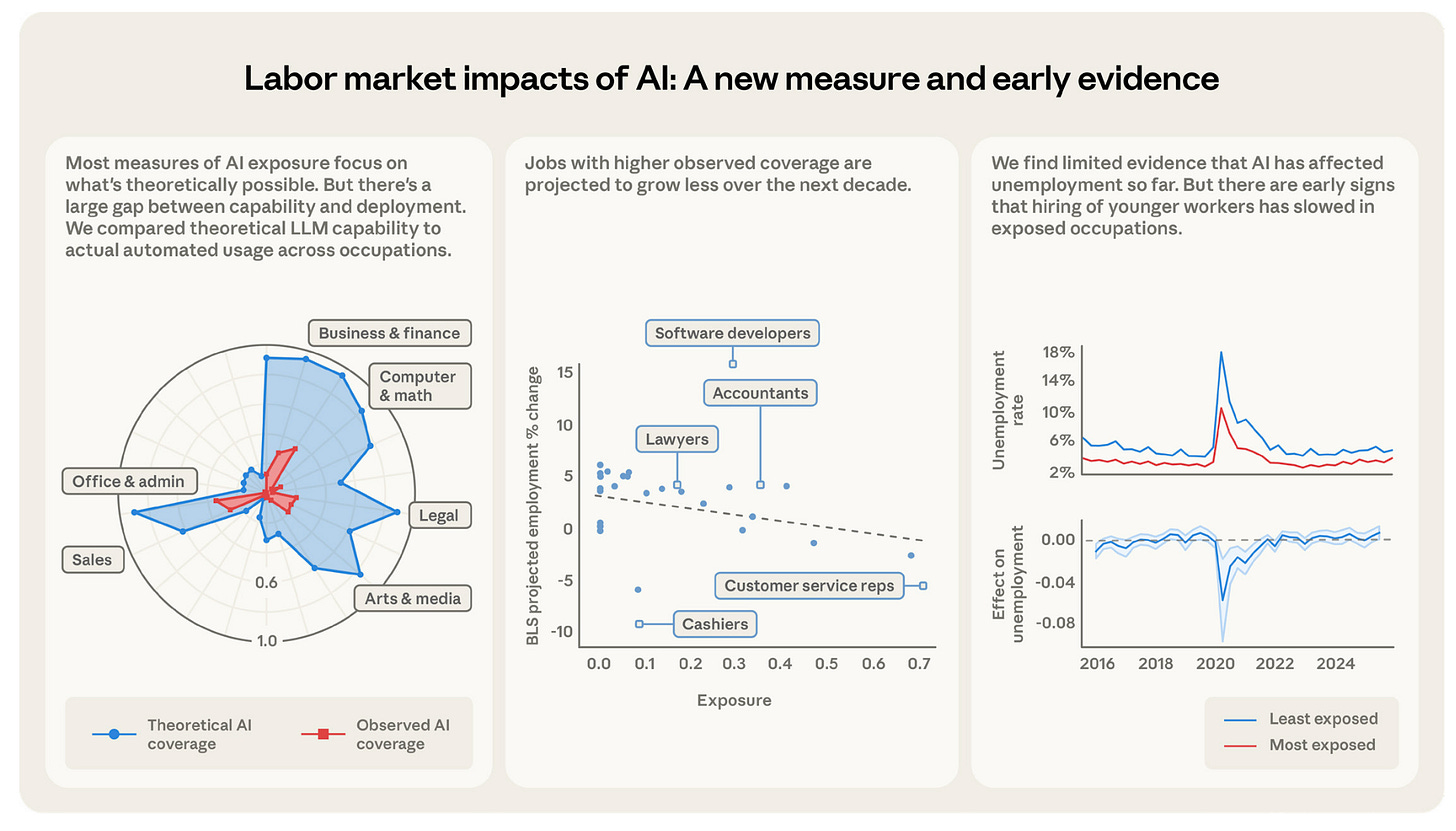

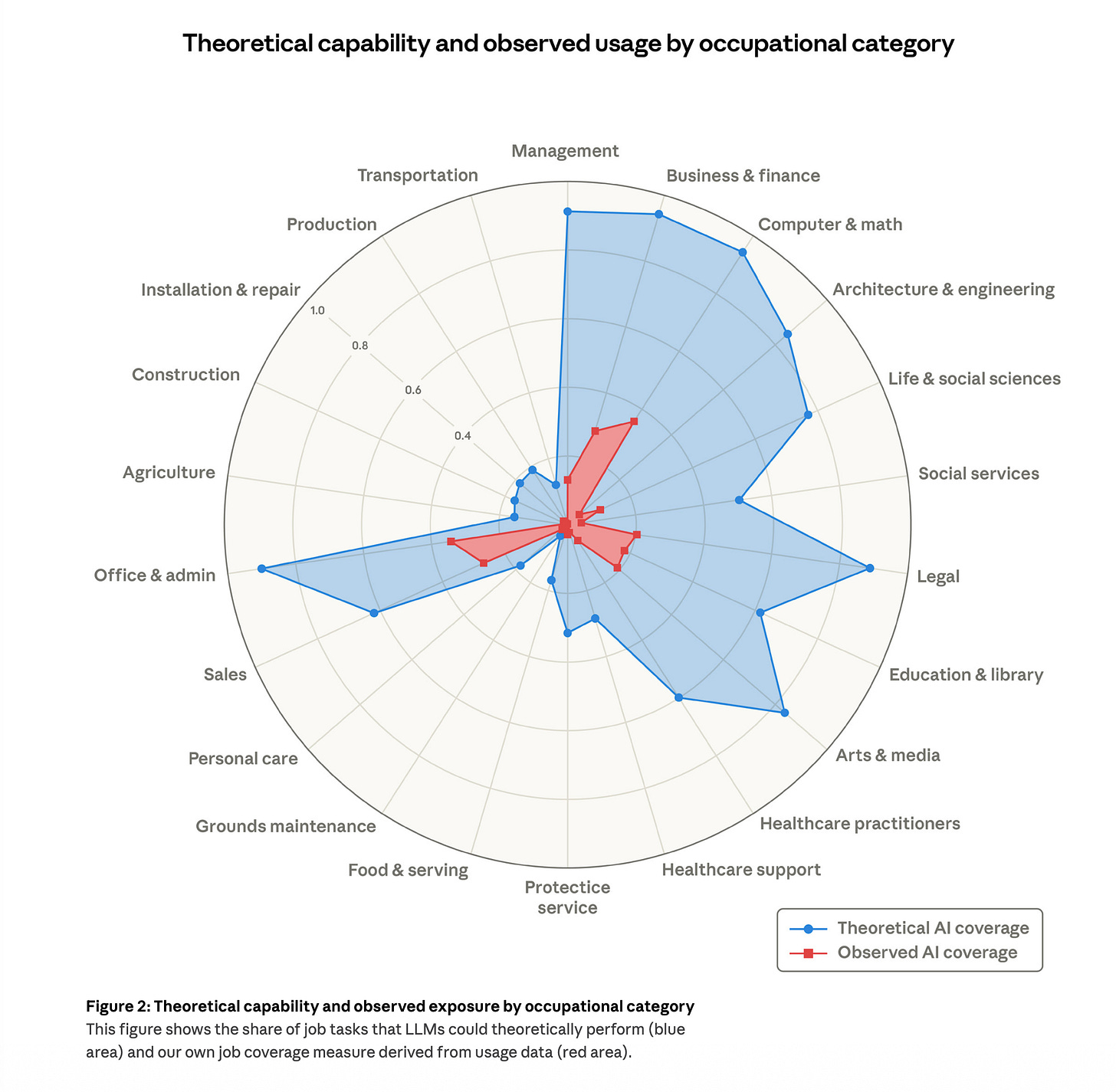

Then Anthropic dropped a new labor market study on March 5, 2026. They created a metric called “observed exposure” that measures actual AI usage in specific jobs, not theoretical capability. The gap between what AI could do and what it’s actually doing is staggering:

Computer and math occupations: 94% theoretical exposure, 33% actual. A 61-point gap.

30% of all workers have essentially zero AI coverage in their daily work.

The average “observed exposure” across occupations is dramatically lower than any prediction model suggested.

36.8% of individuals across the OECD used generative AI in 2025. But firm-level deployment? Only 20.2%. And the size gap is brutal: 52% of large companies use AI versus just 17.4% of small ones. If you work at a company with under 50 people, the odds are stacked against you even having access to proper AI tools, let alone knowing how to use them.

Two-thirds of companies remain stuck in pilot or experimentation phases. They proved AI can do something. They never figured out how to make it do something that matters.

Profession by Profession, the Same Pattern

Sales. 62.8% observed AI exposure in the Anthropic data. Adoption jumped from 24% in 2023 to 43% in 2024, with 56% of sales pros now using AI daily. Although those numbers sound solid, most of that usage is surface-level: drafting emails, summarizing calls. Using AI for the strategic work that actually moves deals (pipeline analysis, objection-handling scripts, personalized outreach sequences) barely happens. 20% of sales activities are already automatable with current tools, but most organizations haven’t implemented any of it.

Marketing. 64.8% observed exposure. 75% of marketers adopted AI tools. But according to Salesforce’s 2026 State of Marketing report, 84% still send generic one-way campaigns. Let that sit for a second. Three out of four marketers have AI. Five out of six still can’t personalize their campaigns. 98% hit personalization obstacles. Data quality is the number one culprit, but the bigger issue is that nobody showed them how to bridge from “I have a tool” to “I have a workflow.” Only 58% have access to their own service data, 56% to sales data, 51% to commerce data. The data is siloed. The AI can’t fix organizational problems.

HR and recruiting. This is the most interesting case because the gap between adoption and satisfaction is the widest. 87% of companies use AI somewhere in recruitment. 93% plan to increase their use. But only 43% of HR teams have broadly adopted AI, and just 17% describe their implementation as “highly successful.” That’s a 26-point gap between having it and having it work, the widest of any function I found in the data. Most teams struggle with screening accuracy and bias, two things that matter enormously in hiring and are hard to get right without structured prompts.

Office and admin. The sharpest acceleration: adoption jumped from 26% to 53% in one year. Executive assistants hit 65%. Although that sounds like empowerment, the US is projected to lose roughly 1 million administrative jobs by 2029. Much of this adoption reflects replacement, not augmentation. The people still in these roles need to become the ones who direct AI, not the ones being replaced by it.

Legal. A size problem. 39% of firms with 51+ lawyers use generative AI, but only 20% of firms with 50 or fewer. Nearly 2x gap based purely on firm size. Smaller practices face high theoretical AI exposure but lack the resources to figure out implementation. Same technology, wildly different access depending on where you sit.

L&D and training. 60% of US teachers use AI in 2026. But corporate L&D teams are behind. The irony is thick: the people responsible for training others on new skills are themselves undertrained on the biggest skill shift in a generation.
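A note on the arithmetic behind these comparisons: some of the gaps above are differences in percentage points and some are ratios, and the two are easy to mix up. The short Python sketch below recomputes both from figures quoted in this piece. The function names and labels are illustrative only.

```python
# (label, higher figure in %, lower figure in %), as quoted in this article.
pairs = [
    ("Computer and math: theoretical vs. observed exposure", 94, 33),
    ("HR: broad adoption vs. 'highly successful'", 43, 17),
    ("Legal: firms with 51+ lawyers vs. 50 or fewer", 39, 20),
]


def gap_points(high: float, low: float) -> float:
    """Difference in percentage points (94% vs. 33% is 61 points)."""
    return high - low


def ratio(high: float, low: float) -> float:
    """How many times larger the higher figure is than the lower one."""
    return high / low


for label, high, low in pairs:
    print(f"{label}: {gap_points(high, low)}-point gap, {ratio(high, low):.2f}x")
```

The point gap answers “how many more out of every hundred,” while the ratio answers “how many times as many.” The legal figures are a 19-point gap but a ratio of about 1.95, which is where “nearly 2x” comes from.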

The Real Barrier: Nobody Showed Them How

50% of organizations cite lack of skilled professionals as their top obstacle. That’s number one. Ahead of data quality, ahead of legacy systems, ahead of governance concerns.

And this is the number that explains everything: only 13% of workers have received any AI training at all. 77% of employers say they plan to upskill their workforce. Only 13% of workers have actually been trained.

Companies bought the tools and forgot to teach anyone what to do with them. Of course adoption is shallow. Of course 84% of marketers are still sending generic campaigns. Of course HR teams with AI screening tools go back to manual review after two weeks.

I’ve spent over 1,000 sessions learning how to give AI good instructions. The difference between a vague prompt and a structured brief is not small. It’s the difference between typing “write me an email” and getting generic slop, and typing “here’s my prospect’s industry, their main objection, our value proposition, and the tone I need” and getting something I can actually send. That kind of specificity takes practice. I learned it through running experiments, through hundreds of iterations, through failing and adjusting.

Most professionals don’t have months to figure this out. They have a job to do right now. They need someone to say: here’s the prompt for your specific task, here are the fields to fill in, here’s what to do with the output. That’s it.
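To make that concrete, here is a minimal sketch in Python of what a fill-in-the-fields brief could look like. It is an illustration only, not how any particular tool is implemented: the `EmailBrief` class, its field names, the `build_prompt` function, and the sample values are all made up for this example.

```python
from dataclasses import dataclass


@dataclass
class EmailBrief:
    """Hypothetical fields mirroring the four items of a structured brief."""
    prospect_industry: str
    main_objection: str
    value_proposition: str
    tone: str


def build_prompt(brief: EmailBrief) -> str:
    """Turn a filled-in brief into a single prompt string."""
    # Refuse blank fields: a half-empty brief slides back into a vague prompt.
    missing = [name for name, value in vars(brief).items() if not value.strip()]
    if missing:
        raise ValueError(f"Fill in these fields first: {', '.join(missing)}")

    return "\n".join([
        "Write a short outreach email to a prospect.",
        f"Prospect's industry: {brief.prospect_industry}",
        f"Their main objection: {brief.main_objection}",
        f"Our value proposition: {brief.value_proposition}",
        f"Tone: {brief.tone}",
        "Address the objection directly and end with one clear next step.",
    ])


# Placeholder values, invented purely for illustration.
brief = EmailBrief(
    prospect_industry="regional logistics",
    main_objection="switching costs look too high",
    value_proposition="migration handled by our team in two weeks",
    tone="direct, friendly, no jargon",
)
prompt = build_prompt(brief)
print(prompt)
```

The printed text is what you would paste into ChatGPT, Claude, or Gemini. The design choice that matters is the check for empty fields: the structure only helps if every slot forces you to write down something specific.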

What Actually Closes the Gap

The 6% of companies seeing real results aren’t using better AI. According to McKinsey, they’re 3x more likely to have strong senior leadership driving adoption. They spend 5x more on implementation (not tools, implementation). And 50% of them redesign actual workflows rather than bolting AI onto existing processes. High performers view AI as a growth tool. Everyone else views it as a cost-cutting experiment.

But here’s the thing. Individual professionals can’t wait for their company to become one of the 6%. That could take years. By then the competitive landscape will have shifted.

The Anthropic data shows young workers aged 22-25 already seeing a 14% decline in job starts in AI-exposed occupations. This is happening now. The entry-level pipeline is shifting. The workers who learn to direct AI effectively are the ones who stay valuable. The ones waiting for corporate training programs are taking a genuine risk.

Even in technical fields where AI tools are most mature, the gap between casual use and effective use is enormous. For non-technical professionals, that gap is wider because fewer people are showing them the path.

The fastest path for an individual: pick one workflow you do every week. Learn to do it with AI. Get good at that one thing. Then expand. Don’t try to transform everything at once. That’s how companies fail. Start with one task.

What I Built to Help

I’ve been building digital products and tracking their impact for months now. And I keep coming back to the same realization: the technology is ready. People aren’t, because nobody gave them a starting point that’s specific enough to be useful.

That’s why I put together the Professional AI Workflow Playbooks. Six editions, each targeting one of the professions where the adoption gap is widest and the opportunity is biggest:

Sales Edition — Cold outreach builder, objection handler, discovery call prep, proposal drafter, follow-up sequences, pipeline analyzer. Six interactive tools that build prompts from your actual deals and prospects.

Marketing Edition — Content briefs, email campaigns, ad copy for every platform, persona building, SEO optimization, campaign reporting. Six tools that turn your strategy into executable prompts.

HR & Recruiting Edition — Job descriptions, resume screening (with built-in bias warnings), interview questions, candidate communication, onboarding plans, policy drafting.

Legal Edition — Contract review, clause drafting, legal research, client communication, compliance checking, case analysis.

L&D Edition — Training design, needs assessment, learning paths, course content, evaluation frameworks, stakeholder reports.

Office & Admin Edition — Meeting management, process documentation, communication drafting, data analysis, project coordination, policy writing.

Here’s how they work. Each playbook is a single HTML file. You open it in your browser. That’s it. No installation. No login. No account. No code. You fill in your context (your company, your role, your specific situation) and it builds the prompt for you. Copy, paste into ChatGPT or Claude or Gemini, and you get output that’s actually relevant to your work.

Three levels of depth: Level 1 gives you ready-to-use prompts for 6 common tasks in your field. Level 2 sets up a persistent AI workspace tuned to your role. Level 3 shows you how to automate recurring workflows with tools like Zapier.

One thing I want to be clear about: everything runs locally on your computer. There’s no server. No data collection. No analytics. Your profile, your inputs, your generated prompts, all of it stays in your browser and never leaves your machine. In an era where people are justifiably nervous about feeding company data into AI tools, I think that matters. The interactive toolkit is 100% offline-capable. You could disconnect from the internet and it would work exactly the same way. The only time data leaves your computer is when you choose to paste a prompt into an AI tool, and that’s your decision to make.

I’m not going to pretend these solve everything. They don’t replace organizational change. They don’t fix data silos or legacy systems. But they close the gap between “I know AI exists” and “I know exactly how to use it for my specific job, today.” That gap is where the vast majority of the workforce is stuck right now.

Five days. One tool per day. That’s the suggested path. By the end of the week, you’ve built the habit and you know whether structured AI prompting makes a difference for your work.

The AI Workflow Playbooks are available at wiz.jock.pl/store. Six editions: Sales, Marketing, HR & Recruiting, Legal, L&D, and Office & Admin. $79 each. Paid subscribers to Digital Thoughts get free access to all of them.

If you want to start somewhere, pick the edition closest to your role. Try one tool. That’s all it takes.