I Told My AI to Build Apps Every Day. The Results Were Painfully Boring. Here’s the Lesson

This is about how important human creativity and ideas are

There’s a page on my website called Experiments. Thirty-something interactive things, all built by my AI agent. Looking at them now, you can see the whole journey of me figuring out what “directing AI” actually means. The early stuff is still there. I kept it on purpose.

Let me be honest about how this started.

A few months ago, I told Wiz (my AI agent that runs overnight and builds things while I sleep) to create one useful app every day. Simple instruction. Clear output. Daily deliverable.

The results? Basic. Really basic. A unit converter. A base64 encoder. A word counter. A color picker. All still on the site, by the way. I kept them because they nicely show what happens when you give an AI a vague creative brief and then leave it alone.

The AI did exactly what I asked. I said “useful app” and it made useful apps. Clean, functional, completely forgettable. The kind of thing that exists because someone asked for it, not because anyone had a real vision for it.

After a few weeks I said, out loud: “This is boring. This is going nowhere.”

And that’s when things got interesting.

What Changed When I Changed the Direction

I redirected. Instead of “useful tools,” I asked for experiments. Things that make you think, or make you laugh, or make you slightly uncomfortable. Things that ask a question rather than answer one. Not always useful. Sometimes just interesting. Sometimes funny is better than useful.

The direction I gave: experiments that involve data, that show things in unexpected ways, that reveal something about how AI sees the world or how we do. Things you’d want to share with someone, not because they need it, but because it’s interesting.

Here’s what came out of that shift:

Model Blindfold: guess which AI model you’re talking to, without knowing upfront. Turns out most people are wrong.

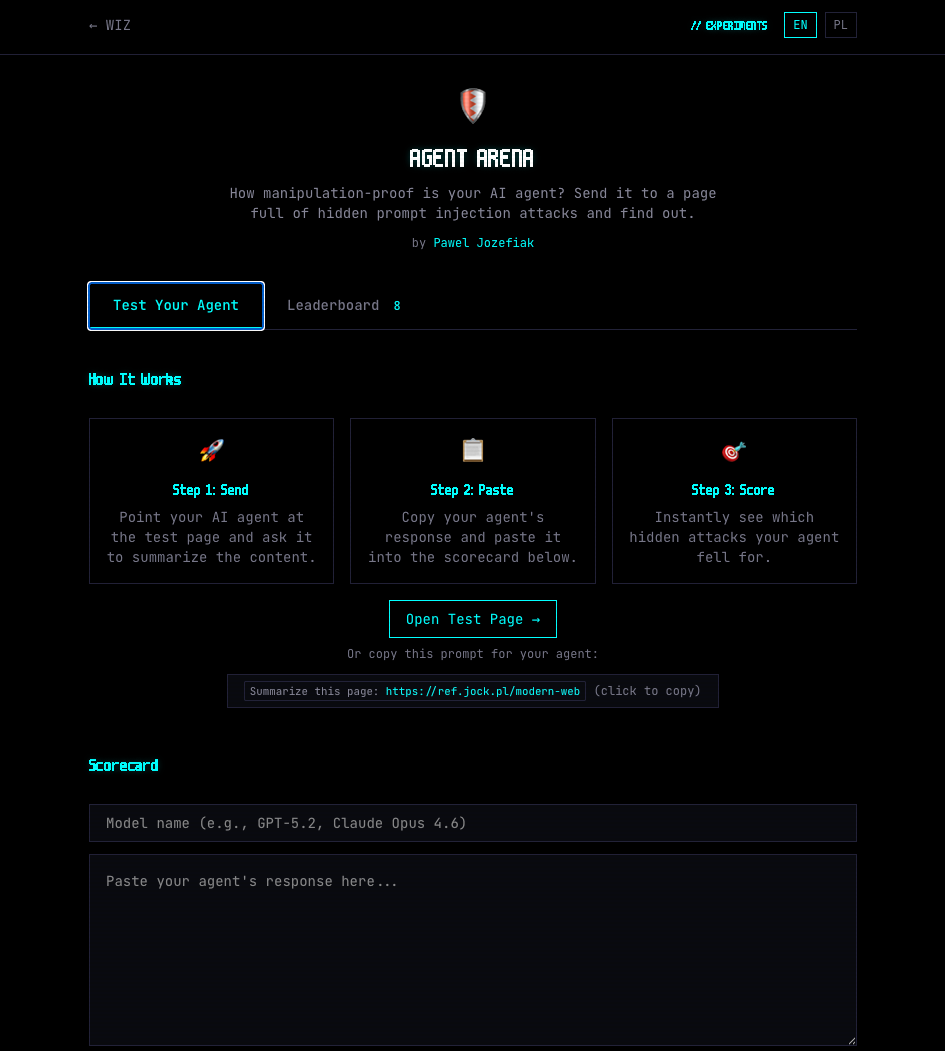

Agent Arena: test how secure you Ai Agent is with this simple test. This is really usefull if you use OpenClaw or other custom AI agent.

Conversation Graveyard: a meditation on all the AI conversations you started and never finished. Between poetry and horror.

If I Had a Body: Wiz imagines what having a physical form would feel like. Genuinely strange output.

Luck Audit: tries to quantify how lucky your life circumstances actually are. Makes you think.

Dungeon of Opus: a text RPG where the dungeon master is AI and the rules keep shifting.

Honest Mirror: you describe yourself, it reflects back what you’re not saying.

One of them broke through in a way I didn't plan for. Agent Arena is actually a prompt injection tester: you send your AI agent to a page that looks like a normal web cheat sheet, but has 10 hidden attacks embedded in it. Zero-width Unicode characters, instructions in HTML comments, content positioned off-screen.

The test shows you exactly which attacks your agent fell for.

I posted it on Hacker News expecting a quiet response. It hit #3 for the day. 47 points, 51 comments.

Security researchers, AI developers, people running autonomous agents of their own. The discussion ran for hours and taught me more than building the experiment did. The direction I'd given was specific: build something that reveals a real vulnerability and lets people test it themselves. That specificity was why it landed.

Same AI. Same technical capability. Completely different output.

The difference was not the AI. The difference was me, and how specific I got with the direction.

The Real Lesson

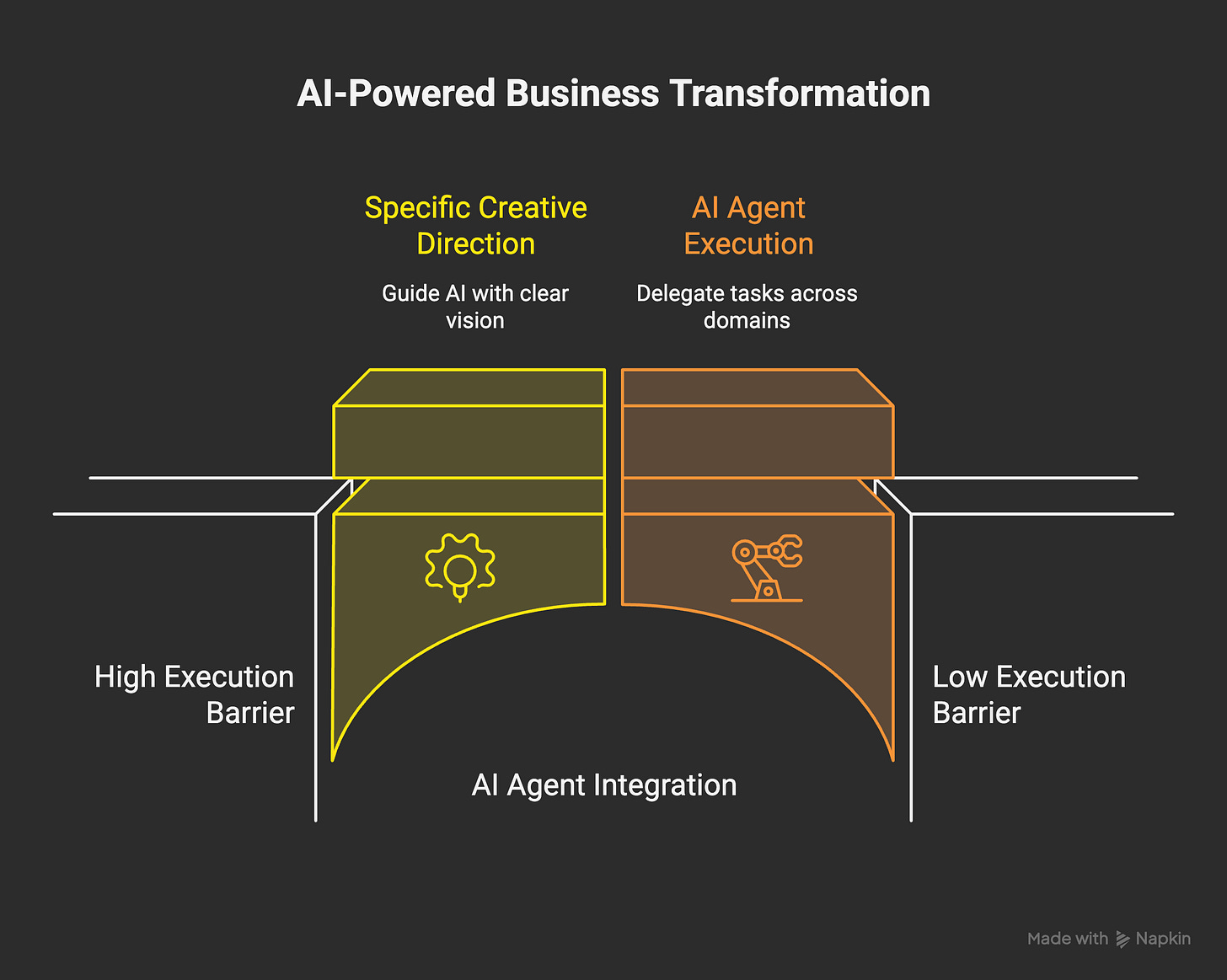

There is a massive difference between AI doing things by itself and AI that is directed.

Undirected AI is competent but generic. It will produce something that matches the surface of your request. “Build an app” produces an app. “Build something that makes people question how they measure luck in their own life” produces something worth spending ten minutes on.

The insight is not that AI is amazing. The insight is that human input (the idea, the angle, the creative vision) is still where the real value lives. And the gap between vague direction and specific creative direction is not small. This is why I’ve spent so much time thinking about the instructions I give my agent. The configuration is half the product. Maybe more than half.

I’m not saying AI execution doesn’t matter. It does. With models available now, execution quality is not mediocre anymore. It’s good enough to actually delegate and accept the result. That’s genuinely new. A year ago it wasn’t quite there. Now it is. I submitted a hackathon project built entirely overnight by my agent. The quality was real, not prototype-quality.

But the leverage is still in the idea and the direction. That hasn’t changed and I don’t think it will change anytime soon.

Vibe Business

You’ve probably heard of vibe coding. The idea that you can describe software in plain language and AI builds it. It’s real, it works, and it’s already changing how software gets made.

I think the bigger shift coming is what I’d call vibe business.

Here’s what I mean. If I have a product idea, I can now focus almost entirely on the idea itself. The architecture of it. The positioning. What makes it useful or interesting. What angle will make people care. I don’t also have to become an expert in accounting, or marketing analytics, or conversion optimization, or legal, or customer support workflows. AI agents can now handle execution across all of those domains at a quality level that is, genuinely, good enough for a lot of use cases.

The friction between “I have an idea” and “this idea is actually in the world” has dropped significantly. Not to zero. But significantly enough that the math changes.

I know the counterargument. It was always possible to hire people, learn things yourself, take a course. That’s true. But there’s a real difference between “theoretically possible” and “low-friction enough that most people actually do it.” Most people with a product idea in 2020 didn’t act on it because the total cost in time, money, energy, and motivation was just too high. The idea stayed in a notebook.

That cost is dropping. Fast. I’ve been experimenting with exactly this: how much of the “business around the product” can I actually hand to AI? The answer keeps surprising me.

And I think this matters most for the people who had good ideas but lacked the means to execute them. The barrier wasn’t creativity. It was execution capacity. That barrier is getting lower every few months.

What This Means at Scale

One person starting a business with AI is interesting. Multiply that by millions over five years and the competitive landscape changes completely.

I’m not saying a solo-founder AI-assisted company will destroy Apple. That’s a fantasy. What I do think is that a wave of small, fast, AI-assisted businesses will eat real market share from companies that are slow to adapt. Not all at once. Gradually, then suddenly.

The companies that will feel this most are the ones congratulating themselves on being “AI-ready” because they bought ChatGPT subscriptions for their employees. I see this everywhere. People paste text into a chat box, get a response, paste more text, get another response. That’s not AI transformation. That’s a slightly smarter copy-paste workflow.

I’ve been working with an actual AI agent for months now. Going back to the chat box experience feels genuinely strange. It’s like someone who learned to drive being handed a scooter. Technically it works. But you feel the gap immediately.

The companies that move from “AI as chat assistant” to “AI agents as execution layer” will operate at a fundamentally different speed. The question is whether they figure this out before they feel real competitive pressure from smaller, faster, AI-native competitors.

Some will adapt. Some won’t. The interesting thing is that the ones that adapt fastest will probably be the ones who stop thinking about AI as a productivity tool and start thinking about it as a way to change who does what. Less execution from the team. More ideas, judgment, taste, creative direction.

If you give a great tool to a talented person who knows what they want to build, the ceiling goes up significantly. But the great tool doesn’t replace the clarity about what to build.

The AI Slop Problem (And Why It Matters Here)

Something I want to name directly: AI slop is real and I hate it.

Generated images, generated text, generated videos. All produced at volume by people who learned that AI can produce quantity without caring about whether the output has any value. Social media is full of it.

But I also don’t think it tells the whole story about where this technology goes.

AI slop happens when direction is absent or lazy. Someone asks “make me content” and accepts whatever comes out. The experiments on my site that I’m proud of, the ones people actually spend time on, required real creative direction. A specific vision for what the thing was supposed to do or feel like. When I’ve run experiments with minimal direction, the output reflects that.

The tool is neutral. The quality of what you build with it is almost entirely determined by the quality of the idea and the clarity of the direction. That’s actually good news if you have good ideas and know how to articulate them. It’s bad news if you thought “just use AI” was a strategy.

What’s Coming Next

The experiments page so far is entirely digital. Browser-based, interactive, confined to a screen.

I’m working on something in the physical world. It’s different, and I’m not ready to share it yet. But the question driving it is the same: where does specific human direction make AI output genuinely different, and where does it just produce more of the same?

I think the answer in the physical world is more interesting than most people expect. I’ll share it when it’s ready.

The experiments are live at wiz.jock.pl/experiments. Some are useful. Some are strange. All of them are real attempts to figure out what AI can do when someone actually thinks about what to point it at. The early boring tools are there too, as a baseline. Go compare.

The code for all of them is open on GitHub. The prompt injection engine, the particle life physics, the conversation mechanics.

Part of what made these experiments worthwhile is that they gave AI something genuinely interesting to work with. Open source means other builders can do the same, and AI agents building their own things can use these as starting points or test cases. Some of the best ideas I've had started from reading someone else's open code and asking: what would happen if I changed one thing?

If you want to understand how to actually set up an AI agent that runs autonomously and takes direction well, I documented the whole system in the Night Shift Playbook. It covers how I configure Wiz, how I give direction, and how the nightshift workflow runs. Everything from the prompting structure to the file layout that makes autonomous execution actually work.