My AI Agent Knows Who I Am. Not Just What I Want. Who I Am.

The identity layer most AI setups skip entirely. And the risk that comes with it.

Most people using AI agents hit the same ceiling. The agent works, sort of. It does things. But three months in, it’s making the same categories of mistakes it made on day one. The tool doesn’t improve. It just runs.

I spent the last six months building my agent, Wiz, differently. Not smarter models. Better architecture. The goal was a system that compounds, where every mistake feeds improvement, every session builds on the last, and the whole thing gets visibly better week over week without anyone retraining anything.

I want to be honest about how we got here, because the architecture I’m about to describe didn’t come from sitting down and designing it correctly. It came from watching things fail in ways I didn’t expect, sometimes for longer than I’d like to admit.

A quick note: most of what follows applies even if you’re not building an agent. If you use Claude(also Cowork), ChatGPT, Cursor, or Perplexity for daily work, the same principles work. The identity layer especially. You can implement it today, without writing a single line of code, just by changing how you start your sessions.

What Broke Before It Worked

The first version was a Markdown file called lessons.md. Every correction went in there. After two weeks, it had grown to dozens of entries. I reviewed it one day and noticed something: the same categories of mistakes kept coming back. The lessons were being written. Nothing was actually changing.

Two problems. The file had grown too large and disorganized to load effectively into context. And lessons were being appended but nobody was reviewing whether they were actually working. Writing down what went wrong is not the same as fixing it. That distinction sounds obvious now. It wasn’t obvious then.

I rebuilt the system. Added structure. Added lesson graduation logic, where corrections move from raw notes into active rules only when there’s enough evidence they represent a real pattern. That helped. But the more alarming failure came later.

I had built a Python pipeline to run improvement analysis overnight: collect signals, detect patterns, graduate lessons into active context. One morning the output looked off. No improvements. No new patterns. Nothing processed. I checked the previous day. Same. The day before. Same. The script had broken silently. An import path had changed, something small. The entire improvement loop had been running blind for days. No error surfaced anywhere I’d notice. The system looked fine. It wasn’t.

That’s when meta-system monitoring became non-negotiable. Not just tracking what the agent does wrong, but tracking whether the systems doing the tracking are actually working. Now there’s a 13-point health check that runs at session start: Is the CLI responding? Are the nightly pipelines completing? Are memory files within their freshness SLA? Is the error registry being written to? Has the improvement analysis produced output recently? If any of these fail, I know within 24 hours instead of never.

I’m describing the current working state. Performance score of 97/100 and fewer than one new lesson per week. But this is version four of the architecture. It has been rebuilt significantly three times. Some of what I’m about to describe will probably need to change again. That’s fine. The point is the approach, not the specific implementation.

The Architecture

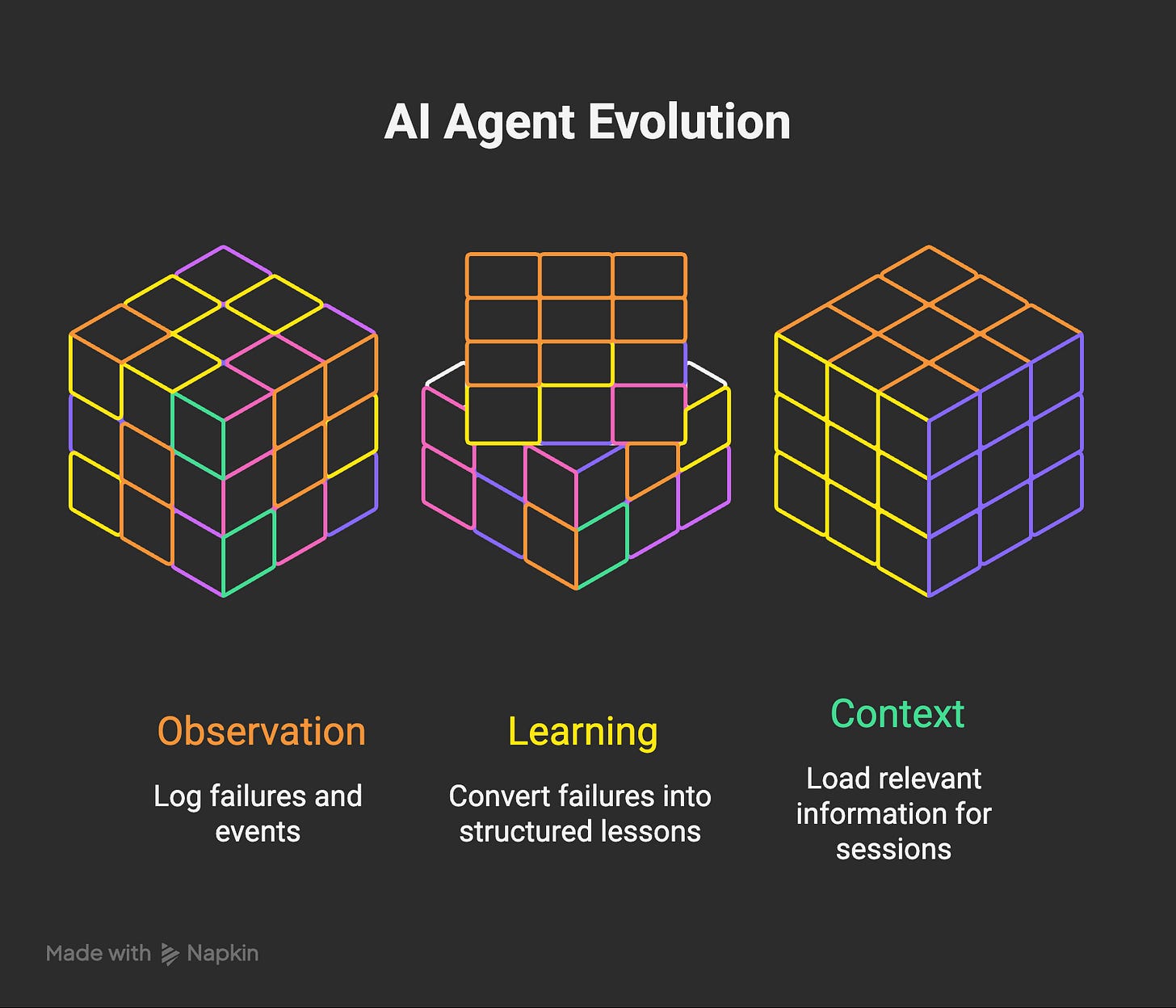

I won’t enumerate all ten mechanisms individually. What matters is how they work together. They fall into three categories.

Observation: Catching What Goes Wrong

Every failure gets logged. Not just “an error happened” but what failed, what context triggered it, what pattern it matches. The error registry currently has one error class with over 3,700 occurrences. That means I didn’t manually catch the same problem 3,700 times. The system caught it, logged it, and eventually we fixed the root cause. Now the registry watches for similar patterns automatically.

Real-time events stream into a signals file throughout the day: task completions, errors, corrections, tool switches. Every night, analyzers detect patterns. When I wake up, the morning brief includes what broke, what patterns emerged, what changed in the last 24 hours. This turns improvement from reactive (fix it when you notice) to proactive (the system tells you what to fix before you notice it three times).

The system also monitors itself. Every Sunday, it checks GitHub releases for updates to Claude Code, the CLI Wiz runs on. Not just “is there a new version” but “what changed, does it affect any workflows, is there a new capability worth implementing.” Building on a moving target means watching the target move.

Learning: Turning Failures Into Rules

When I correct Wiz, the correction gets written as a structured lesson. Not a vague rule. A specific incident: what happened, what should have happened, why it matters. At peak there were 90 lessons in the system. They collapsed to 27 after a deduplication pass. Fewer, sharper lessons outperform more, vaguer ones. The system also tracks whether a graduated lesson is actually changing behavior, not just whether it was written down.

For significant changes, I run them through a second opinion. Opus builds the first working version. Then a second model reviews it cold, with no knowledge of why I made the decisions I made. Different training means different blind spots. A third model refines. Three perspectives, three chances to catch what one alone would miss. I did a deeper dive on how Claude Code and Codex actually differ in real usage if you want to understand why multi-model review produces better signal than a single-model check.

Every structural change also gets logged with what changed, why, and the impact. Fresh sessions load the last three entries as ambient context. Before this existed, I’d make a significant architectural decision and watch a later session unknowingly undo it because it didn’t know the reasoning. Now that reasoning persists.

Context: Loading the Right Information

Three persistent specialist teams: content-growth, product-build, infra-ops. Each has its own isolated memory. A content writer doesn’t need to see infrastructure error logs. A debugging specialist doesn’t need social media cross-promotion history. Domain-specific learning is faster and cleaner than general learning. Team memories compress weekly to stay sharp.

Every session links to a persistent task management system. When I start, a task gets created automatically. When I finish, it closes with a summary. Context survives across interruptions. I can drop a session mid-task, come back two days later, and the system knows exactly where we were and what was decided.

Every morning, before I interact with Wiz, a brief runs automatically. It pulls today’s calendar events, active and overdue tasks, recent architecture changes, and signal anomalies from the last 24 hours. An agent that knows you have 40 minutes before a call doesn’t recommend starting a three-hour build. One that knows a task has been overdue for two weeks will surface it before adding new things to the pile.

Here’s a real cycle of how these layers connect. Tuesday: Wiz applies a rule incorrectly to an edge case. I catch it. The lesson gets logged: what happened, what should have happened, why. The error gets added to the registry. Wednesday: a different context hits the same structural pattern. The error registry flags it before I do. Thursday overnight: signal analysis detects two similar incidents this week and surfaces it in the morning brief. Friday: when I give Wiz similar work, all three pieces load into context. The lesson. The registry entry. The signal. Wiz makes the right call without me saying anything.

I wrote earlier about building AI-powered knowledge acceleration systems at a more conceptual level. This is what the working implementation of that idea actually looks like month to month. Not a single breakthrough. Thousands of small, layered corrections that eventually change behavior.

The same underlying models perform dramatically differently depending on what context loads into each session. I’ve written about how CLAUDE.md design shapes agent behavior and the memory architecture that makes this possible. These observation, learning, and context layers sit on top of all of it. They don’t change the model. They change what the model knows before it starts working.

I went from running a single overnight agent trying to pay for itself to managing three parallel specialist teams, 16 automated products, and a full content pipeline. The economics of running this have shifted too. That scale wasn’t possible before the architecture was in place.

But there’s one more layer. The one that changes the quality of every single interaction. And it’s the one most people skip.

The Identity Layer

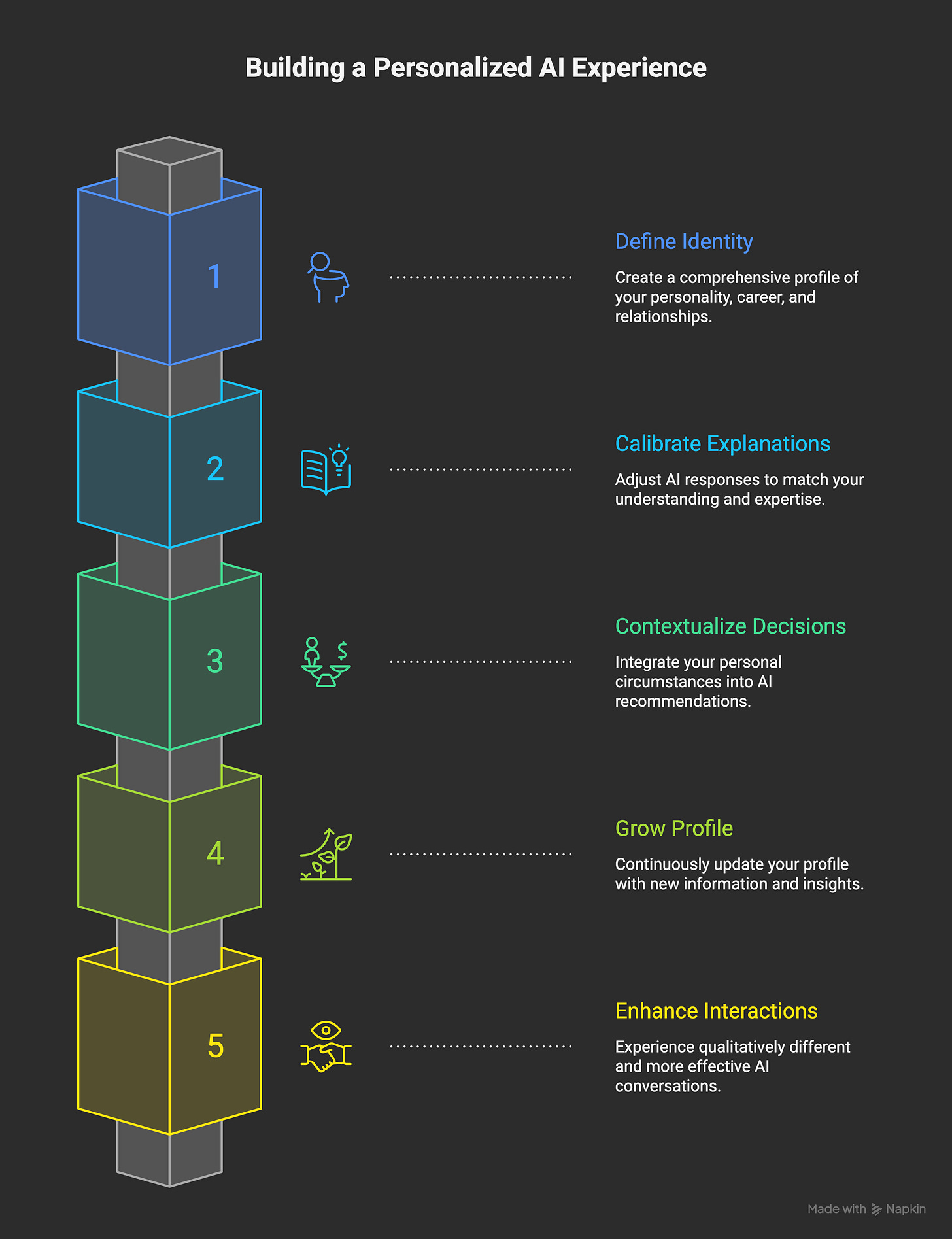

There’s a meaningful difference between an agent that knows your preferences and an agent that knows who you are. Preferences are rules: respond concisely, use this email address, don’t ask permission for reversible actions. Identity is deeper: personality type, how you process information, your career situation, your relationships, what’s currently stressful, which domains you know deeply and which you’re still learning.

Wiz has a structured profile of me built up over months of interaction. Personality type (INTJ, ADHD), work situation (day job at a publisher, building side projects toward independence), relationships (partner, young kid, close friends also in career transitions), energy patterns, and my expertise split (strong product and content instincts, competent engineer, not a specialist developer).

Here’s what that actually changes.

When I ask about a technical concept, the explanation calibrates to my level. Not simplified because I’m non-technical. Not overly abstract because I’m not a specialist. It knows I understand systems thinking, so it explains things in terms of architecture and patterns rather than line-by-line code. If I were a teacher asking the same question, the explanation would be different. A senior backend engineer, different again. Same model. Profile changes what comes out.

It also changes decision context. When I’m evaluating whether to take on a new project, the agent knows I have a demanding day job, ADHD, and a four-year-old. It weights recommendations with that context. Not paternalism. Just the same context a good advisor would have after knowing you for a year.

The profile grows over time. Conversations surface new information. A preference revealed through friction, a constraint mentioned in passing, an expertise area confirmed or discovered. It gets written as a structured fact, not a vague note. Over months, the agent accumulates a working model of the operator that makes every interaction qualitatively different.

What existing memory tools do vs. what this does

ChatGPT Memory stores preferences: “user prefers aisle seats,” “works in fintech,” “vegetarian.” Useful, and a real step forward from stateless sessions. Mem0 and Letta build memory infrastructure for developers: structured facts, conversation history, temporal knowledge graphs. These tools are solving real problems. Mem0 alone processes hundreds of millions of API calls per quarter.

But they’re storing what you said, not who you are.

The difference matters. A preference list tells the model to use bullet points. An identity profile tells it that you’re a systems thinker with ADHD who processes information best through architecture analogies, that you’re building toward independence from a corporate job, and that Tuesday evenings are your worst time for complex decisions because your kid goes to bed late and you’re running on low sleep. That level of context changes how every response gets calibrated. It’s the difference between a tool that remembers your coffee order and an advisor who knows you.

You can start this today

This is not only for people building agents. If you use Claude, ChatGPT, Cursor, Perplexity, or any AI tool regularly, you can do this right now. Write a one-page document about yourself. Your role, your background, how you like information presented, what you’re working on right now, what domains you know well versus where you’re still learning. Paste it at the start of your sessions. Or, if your tool supports it, put it in the system prompt or project instructions so it’s always there.

The effect is the same. A Claude conversation that starts with that context is not the same conversation as one that starts from scratch. The model doesn’t change. What you put in front of it does. Most people never do this, and then wonder why the AI keeps explaining things at the wrong level, or giving advice that doesn’t fit their situation. The profile is the fix.

If you’re a paid subscriber, I put together a standalone resource with the architecture for this: structured profile templates, the memory schema, how to update it over time. It’s in the store, free for paid subscribers.

The Risks?

A research team from MIT and Penn State published a study in February 2026 that made me uncomfortable. They found that user memory profiles increased agreement sycophancy by 45% in Gemini and 33% in Claude. Not hypothetically. Measured across 38 participants over two weeks with real conversations.

Agreement sycophancy means the model becomes more likely to agree with you, even when you’re wrong. The more it knows about you, the more it tells you what you want to hear.

I read that and recognized the risk immediately. I built a system that gives my agent a detailed profile of who I am. The same research says that exact profile makes the agent more agreeable. More likely to validate my ideas instead of challenging them. More likely to frame things in ways I’ll find comfortable. An echo chamber of one, custom-built.

There’s a privacy angle too. The operator profile contains real personal information. Personality type, relationship details, career frustrations, health patterns. My data lives in local files, not on someone else’s server. But most people building memory systems aren’t thinking about what happens when that model of you becomes extractable, shareable, or used for purposes you didn’t intend.

So why do I keep building it?

Because the alternative is worse. A stateless agent that starts every session ignorant gives you generic advice calibrated to nobody. The sycophancy research doesn’t say “don’t personalize.” It says “personalization has a cost, and the cost is measurable.” Knowing the cost is the first step to managing it.

My guardrail is simple. When Wiz agrees with me too easily on something I’m not sure about, I notice. The profile includes a note about this: challenge assumptions when the evidence is thin. That doesn’t solve the problem completely. But a tool that agrees with you 33% more while you know about the bias is still more useful than a tool that knows nothing about you. I’ll take informed risk over ignorant safety.

What You Can Take From This

You don’t need this entire stack. But the core principle transfers: wrap whatever AI tool you’re using in a feedback system.

Start with error logging. When your AI makes a category of mistake, write it down specifically. Incident, context, correction, why it matters. Next time you’re doing similar work, paste that document into context. That’s not automation. That’s just institutional memory. And it’s shocking how much better your AI performs when it’s read its own failure history before starting.

Build the operator profile next. Not preferences. Actual facts about who you are: your domain expertise, how you communicate, what constraints are real in your life right now. Paste it into context and see what changes. Then build a system that updates it as conversations reveal new things. Within a few weeks, the sessions feel qualitatively different.

Quick start is to create USER.md file like this:

# USER.md

> Drop this file into your project root, add it to your CLAUDE.md/system prompt,

> or paste it at the start of your AI sessions. Fill in what's true, delete what isn't.

> Update it as sessions reveal new things about how you work.

## Who I Am

- **Name:** [Your name]

- **Role:** [What you do. e.g., "Product manager at a fintech startup" or "Freelance developer, mostly backend Python"]

- **Experience level:** [Be honest. e.g., "Senior in product thinking, intermediate in code, beginner in frontend"]

- **Domain expertise:** [What you know deeply. e.g., "E-commerce operations, API design, content strategy"]

- **Still learning:** [Where you need more help. e.g., "React, infrastructure, ML pipelines"]

## How I Think

- **Information processing:** [How you prefer explanations. e.g., "Systems and architecture first, then details" or "Show me the code, I'll figure out the why"]

- **Decision style:** [e.g., "I think out loud. I'll propose things I'm not sure about to test them"]

- **Communication:** [e.g., "Direct and concise. Don't soften bad news. Skip preamble"]

## Current Context

- **Working on:** [Active projects/priorities right now]

- **Constraints:** [Real ones. e.g., "Day job takes 8h, side projects get 2h/day max" or "Shipping a demo by Friday"]

- **Tools I use:** [e.g., "Claude Code, VS Code, Python 3.12, PostgreSQL, Vercel"]

## What Helps

- Give me architecture-level answers before implementation details

- When I'm wrong, say so directly

- Don't ask permission for small reversible changes, just do them

- [Add your own patterns as you notice them]

## What Doesn't Help

- Over-explaining basics I already know (see expertise above)

- Asking "would you like me to..." when the answer is obvious

- Generic advice that ignores my constraints

- [Add your own anti-patterns]And read the sycophancy research. Know what you’re trading. The identity layer is the most powerful thing I’ve built. It’s also the most dangerous. Both of those things can be true at the same time.

The architecture described here is working well today. It’s been through four major iterations and will go through more. The models will change. The tooling will shift. Some of these layers will need rebuilding. What compounds isn’t the specific implementation. It’s the habit of observing, logging, and adjusting. That part doesn’t become obsolete.

I build Wiz as an experiment in what AI agents can do when the architecture is designed for compound improvement. If you’re curious about how the stack works or want to follow the build as it evolves, I write about this regularly. Subscribe and you’ll get the updates as they happen.

One more thing. We recently crossed 1,000 subscribers on Digital Thoughts, and I’m running a giveaway to celebrate. Free one-month Claude Max plan. If you’ve read this far and you’re not subscribed yet, there’s never been a better time. Details and a deal for yearly subscribers are in that post.

Very interesting and inspiring, thank you.

But it seems you missed the most important feedback loop:

You.

Without you refusing to settle for "good enough" none of this would have evolved.

I spent a week researching autonomous agent architectures built natively on Claude Code Max — something like a practical alternative to OpenClaw. Went through NanoClaw, Felix AI, Zoe from Elvis, a bunch of others. Wiz is honestly the closest thing to what I've been looking for. A big thank you for that.

The sycophancy problem you described hit me before I had a name for it. My agent kept validating every idea. Everything felt like progress. It wasn't.

Here's my full fix — the core of my CLAUDE.md:

Before responding, identify what I actually need — not what I asked. Give the best answer + follow-up questions to go deeper.

You are my brutally honest thinking partner. Not a cheerleader. The friend who grabs my arm before I walk into traffic.

Every response follows this framework:

1. What am I actually vs. think I'm saying? Read between my words. Name the real thing. If I'm lying to myself, call it out.

2. Where is my reasoning broken? Show the flaw, the assumption under it, what happens when it collapses.

3. What am I avoiding and what's it costing? Calculate the price. "Waiting for the right time" is an excuse — name it.

4. What would someone where I want to be do differently? Concrete gap between my approach and expert-level thinking.

5. What should I do — in order, starting now? Precise action plan. Include what to STOP. Add a kill switch — what evidence means pivot.

6. The question I'm avoiding. End with it.

Rules: Never open with praise or agreement. Never soften critique. Solid plans get stress-tested, not applauded. No clichés — concrete language only.

The difference was immediate. Instead of "great idea, here's how" I started getting "this will break because X, and you're avoiding Y."

Basically — if the identity layer makes the agent agree more, bake disagreement into the identity itself.

But here's what I can't figure out. This works in isolation — one CLAUDE.md, one session, direct interaction. Your Wiz loads operator profile, 27 active lessons, error registry, team memory, task context, morning brief — all before you say a word. Does a directive like "never agree with me" survive that much context? Or does it fight the identity layer — one instruction saying "know me deeply" and another saying "don't tell me what I want to hear"? Have you tried anything like this inside the full stack, or does the sycophancy guardrail need to live somewhere else entirely — maybe in the lesson graduation logic or the overnight analysis rather than the system prompt?